BAKERSFIELD, Calif. (KERO) — As artificial intelligence continues making its way into classrooms, some parents of K-12 students say they worry their children could be misled by what they see online.

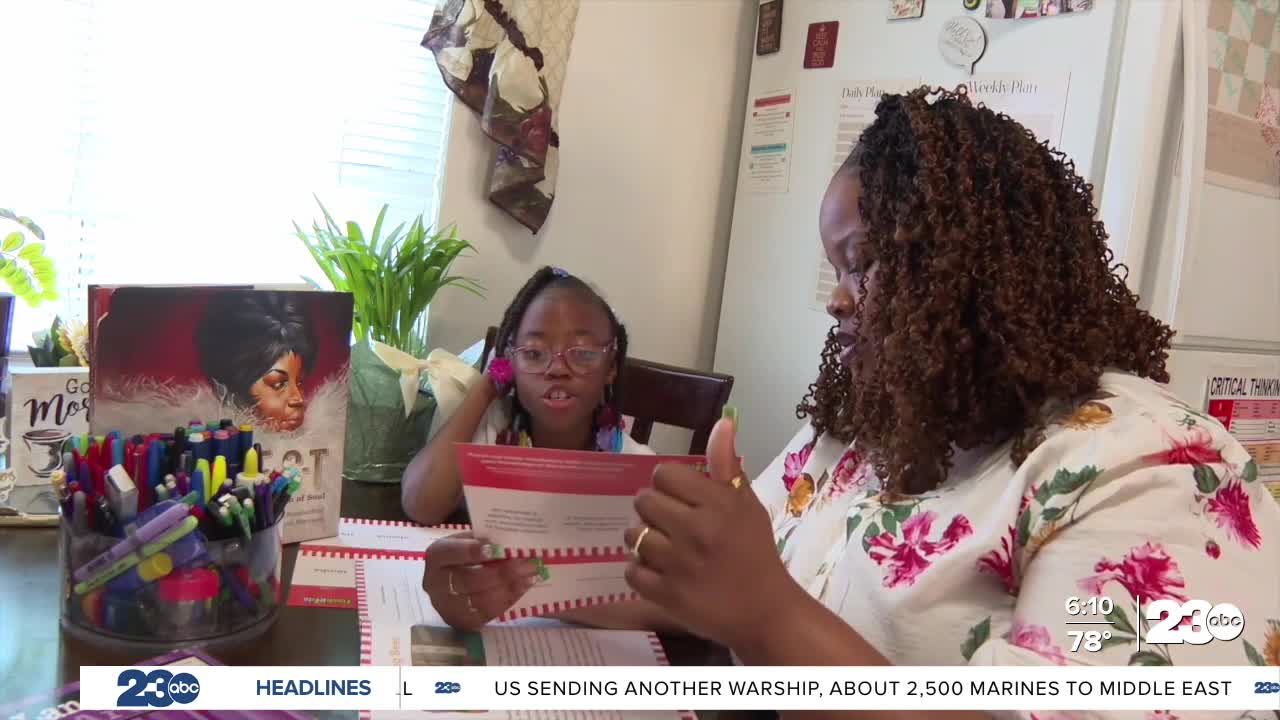

For parents like Tela Herbert, the concern became clear when her 7-year-old daughter watched a video online that appeared real at first.

“She looks at something, it was a video, and she was like, ‘Oh, Mom, this is so sad.’ It was a lady getting robbed, and you can tell, as the gun had hit, it was so fake,” Herbert said.

Herbert’s daughter, My’Kayla Lewis, has autism, dyslexia and ADHD. Herbert said that can make it harder for her daughter to distinguish what is real from what is not online. Because of that, she prefers her daughter spend more time reading books instead of using screens.

“Sometimes it can become diluted for her. My thing is, what are they being exposed to? What if they can’t tell real from fake, and there’s dangers out there?” Herbert said.

Concerns like Herbert’s are part of the reason California lawmakers passed SB 942, known as the AI Transparency Act.

The law aims to increase transparency around artificial intelligence, though it is not yet fully in effect. One provision would require digital signatures on devices such as cameras and smartphones to help verify whether images are authentic. Another provision focuses on identifying AI-generated content by analyzing metadata, the file information attached to photos or videos.

Cybersecurity expert Matthew Smith said the approach has limitations.

“The biggest problem with metadata is that it can be edited. You can change it. So if the metadata is supposed to say it was created by OpenAI or ChatGPT, and I’m someone who is making AI content look real or pass off as real, I can edit that metadata to say it was produced by an iPhone,” Smith said.

Because metadata can be altered, Smith said it may be difficult to rely on it as proof that an image or video was created by artificial intelligence.

For Herbert, the concerns extend beyond online content.

Some Kern County school districts incorporate computers into their curriculum and allow tools such as ChatGPT in the classroom.

“When she comes home, she’s learning songs, she’s learning dances, things that I don’t allow her to have access to,” Herbert said.

Herbert said her daughter has also picked up new phrases using the tool.

“Sometimes you can access, say, if your friend says something and you don’t know what language it is. My friend told me something like ‘callarse la boca.’ That means ‘shut your mouth,’” Lewis said.

According to the Bakersfield City School District, students must use district technology and digital tools in compliance with the Responsible Use Policy and all applicable school rules, board policies and administrative regulations.

Herbert said she believes clearer safeguards around artificial intelligence are needed, particularly in schools.

“I understand that AI is important. I understand that the world is changing. However, tools in one mind can definitely be a weapon in someone else’s,” Herbert said.

Cybersecurity experts say parents should stay involved in how their children use technology by setting boundaries at home and communicating with teachers about how digital tools are used in the classroom.
